NGS / high-throughput sequencing pipeline

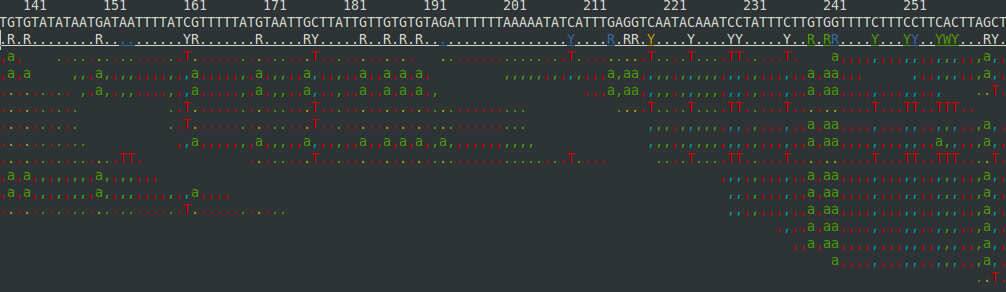

This is the minimal set of preprocessing steps I run on high-throughput sequencing data (mostly from Illumina sequencers), followed by how I prep and view the alignments. If there's something I should add or consider, please let me know.

I'll put it in the form of a shell script that assumes you've got this software installed. I'll also assume your data is in FASTQ format. If it's in Illumina's qseq format, you can convert it to FASTQ with this script by passing a list of qseq files as command-line arguments. If your data is in color-space, you can just tell bowtie that's the case, but the FASTX stuff below will not apply.

This post assumes we're aligning genomic reads to a reference genome. I may cover bisulfite-treated reads and RNA-Seq later, but the initial filtering and visualization will be the same. I also assume you're on *Nix or Mac.

Setup

The programs and files will be declared as follows.

#programs
fastqc=/usr/local/src/...